Timeline: W1 → proto → bugs → Arrow → prod? → <100ms

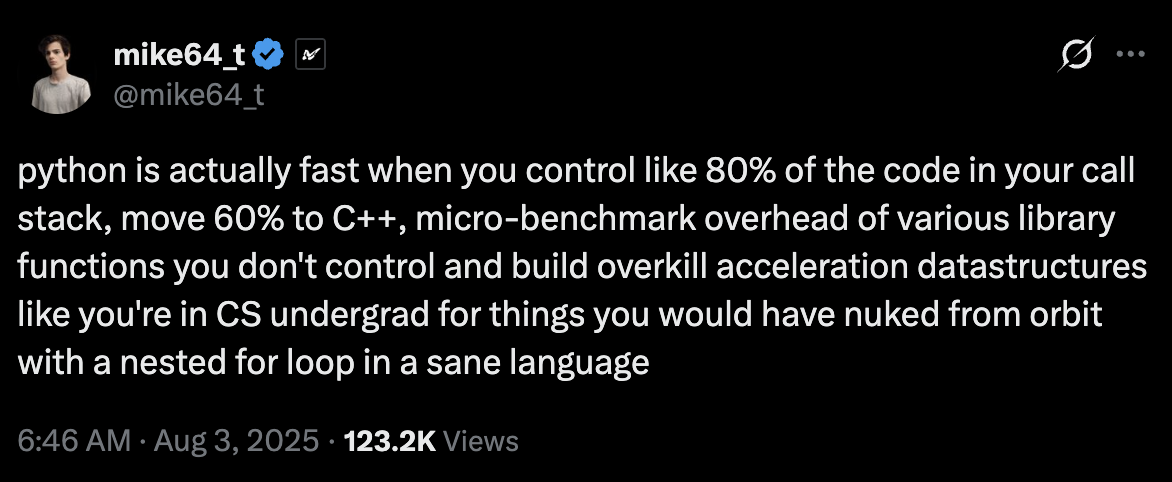

DRW's quant researchers write their code in Python because it's flexible, expressive, and fast to iterate on. But Python, as everyone knows, is notoriously slow.

So someone always has to rewrite the code in C++ to get decent enough performance to even run a comprehensive backtest.

My moonshot project was to compile Python.

Obviously, I am not the first person to try this, and for a few days I read the docs of all major Python compilers and interpreters.

I decided to go with Codon, not because it solved my problem, but because it had pointers, memory management, and a half-decent C FFI.

Within 2 weeks I had a working prototype that interfaced with all the libraries my team wanted. I had figured out the interoperability with C++ and built some auto-sugar for most of the common things QRs use.

I even found bugs in Codon and reported them.

One of my coworkers said that was the first time he saw an intern debug assembly — apparently Codon has weird struct layout conventions.

I also managed to clobber the stack after passing more than 12 params to a function... later discovering Codon hardcodes function params...

I ended up going very deep into Arrow internals to figure out how to use this janky bootstrapped compiler on top of Codon to interface with Arrow tables with zero copy. #DataMovement
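To make "zero copy" concrete, here is a minimal sketch using only Python's built-in buffer protocol as a stand-in for Arrow buffers. It is not the Arrow integration described above, and the `prices` data is made up. It shows only the core idea: a view shares the producer's memory, so writes through the view are visible in the original, while an ordinary slice makes a copy.

```python
from array import array

# One contiguous buffer of 64-bit floats (hypothetical sample data).
prices = array("d", [101.0, 102.5, 99.75, 100.25])

view = memoryview(prices)   # no copy: exposes the same underlying bytes
tail = view[2:]             # slicing a memoryview is also zero-copy

tail[0] = 98.0              # write through the view...
assert prices[2] == 98.0    # ...and the original buffer sees the change

copied = prices[2:]         # slicing the array itself DOES copy
copied[0] = 0.0
assert prices[2] == 98.0    # the original is unaffected by the copy
```

The trade-off is lifetime management: the view is only valid while the owning buffer is alive and not resized, and a compiler has to get that right on both sides of the language boundary.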

With half the internship left, I was on to using my compiler in production.

I got access to a super spicy secret volatility forecasting algorithm, so I had to learn some stats, because I wanted to reimplement the algorithm itself, not just translate and compile it.

I ended up creating a dynamic programming algorithm for this, one that could run on a circular buffer and make the most of the caches.
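The forecasting algorithm itself is secret and is not reproduced here. As a rough illustration of the general idea (deriving each new result from the previous one over a fixed-size circular buffer instead of rescanning the whole window), here is a minimal sketch of a rolling variance. The class name `RollingVariance`, the window size, and the choice of variance as the statistic are all assumptions made for illustration.

```python
class RollingVariance:
    """Rolling population variance over a fixed-size circular buffer.

    Hypothetical illustration only; not the algorithm from the post.
    """

    def __init__(self, window: int) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self.window = window
        self.buf = [0.0] * window  # preallocated ring storage
        self.head = 0              # slot that will be overwritten next
        self.count = 0             # number of valid samples (<= window)
        self.total = 0.0           # running sum of samples in the window
        self.total_sq = 0.0        # running sum of squares in the window

    def push(self, x: float) -> None:
        """Add a sample in O(1), evicting the oldest one once full."""
        if self.count == self.window:
            old = self.buf[self.head]
            self.total -= old
            self.total_sq -= old * old
        else:
            self.count += 1
        self.buf[self.head] = x
        self.total += x
        self.total_sq += x * x
        self.head = (self.head + 1) % self.window

    def variance(self) -> float:
        """Population variance of the samples currently in the window."""
        if self.count == 0:
            raise ValueError("no samples")
        mean = self.total / self.count
        # Clamp at zero to absorb small negative rounding errors.
        return max(self.total_sq / self.count - mean * mean, 0.0)


rv = RollingVariance(window=3)
for r in [1.0, 2.0, 3.0, 4.0]:
    rv.push(r)
# The window now holds 2.0, 3.0, 4.0.
```

The buffer is allocated once and reused, so the working set stays small and contiguous, which is what makes this kind of layout cache-friendly. The sum-of-squares form can lose precision on real data; Welford-style updates are a common alternative.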

The previous service ran in a couple of hours, while mine ran in under 100ms. That let me run many more experiments, which ended up improving the R² by 0.005 as well.

Very simplified, and only for illustration purposes

Both systems start simultaneously. Each processes one year of daily data and returns a volatility forecast. The ball reaches the finish line when the computation completes.

- Python strategy code is parsed to an AST, type-inferred, then lowered to a typed IR. From there, C++ source is emitted and compiled to a native shared library via LLVM. Strategy engineers write ordinary Python; the runtime runs native code.

- A bidirectional interoperability layer allows compiled functions to call back into Python objects — and Python to call compiled functions — without data copying or serialisation. No FFI boilerplate required from the strategy author's side.

- NumPy arrays, Arrow record batches, and Pandas DataFrames are passed across the language boundary as zero-copy views of existing memory buffers.

- The volatility forecasting service backtests a full year of daily closes and produces a forecast in under 100ms. R² improved by 0.005 over the interpreted baseline.
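The first two stages of the pipeline in the list above (parse to an AST, then infer types) can be sketched with Python's standard `ast` module. This is a toy under stated assumptions, not the project's compiler: it handles only `int` and `float`, only `+ - * /`, requires every parameter to be annotated, and the helper names `infer` and `infer_return_type` are hypothetical.

```python
import ast

SOURCE = """
def scale(x: float, n: int) -> float:
    return x * n + 1
"""


def infer(node: ast.expr, env: dict[str, str]) -> str:
    """Infer 'int' or 'float' for a tiny subset of expressions."""
    if isinstance(node, ast.Constant):
        value = node.value
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            raise TypeError(f"unsupported constant: {value!r}")
        return type(value).__name__
    if isinstance(node, ast.Name):
        return env[node.id]  # parameter type taken from its annotation
    if isinstance(node, ast.BinOp):
        left = infer(node.left, env)
        right = infer(node.right, env)
        if isinstance(node.op, ast.Div):
            return "float"  # true division always yields a float
        if isinstance(node.op, (ast.Add, ast.Sub, ast.Mult)):
            # Usual numeric promotion: any float operand makes a float.
            return "float" if "float" in (left, right) else "int"
    raise TypeError(f"unsupported node: {type(node).__name__}")


def infer_return_type(source: str) -> str:
    """Parse one function and infer the type of its final return."""
    func = ast.parse(source).body[0]
    if not isinstance(func, ast.FunctionDef):
        raise TypeError("expected a function definition")
    # Assumes every parameter carries an annotation.
    env = {arg.arg: ast.unparse(arg.annotation) for arg in func.args.args}
    ret = func.body[-1]
    if not isinstance(ret, ast.Return) or ret.value is None:
        raise TypeError("expected a trailing return with a value")
    return infer(ret.value, env)


print(infer_return_type(SOURCE))  # prints "float"
```

A real compiler would continue from here by lowering the typed tree to an IR and emitting code, and would need to cover control flow, containers, and library calls.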